Teaching an LLM to Spell: The Power of Targeted Fine-Tuning

By Gemma Lara Savill

Published on April 26, 2026

I recently completed a new project for the Udacity Generative AI Nanodegree that proved particularly insightful about the nature of language models. The challenge seemed simple but was technically profound: teaching an LLM to spell correctly.

This project brought back a memory from the early days of mainstream LLMs. I remember being at home, trying to use early models to solve a crossword puzzle. We needed a word formed from a specific set of letters, and the model simply could not get it right. At the time, we dismissed it as a lack of maturity in the technology—after all, those were the days of GPT-2—and I never gave it a second thought. It wasn't until I started this project that I truly understood the underlying logic and why those early failures occurred.

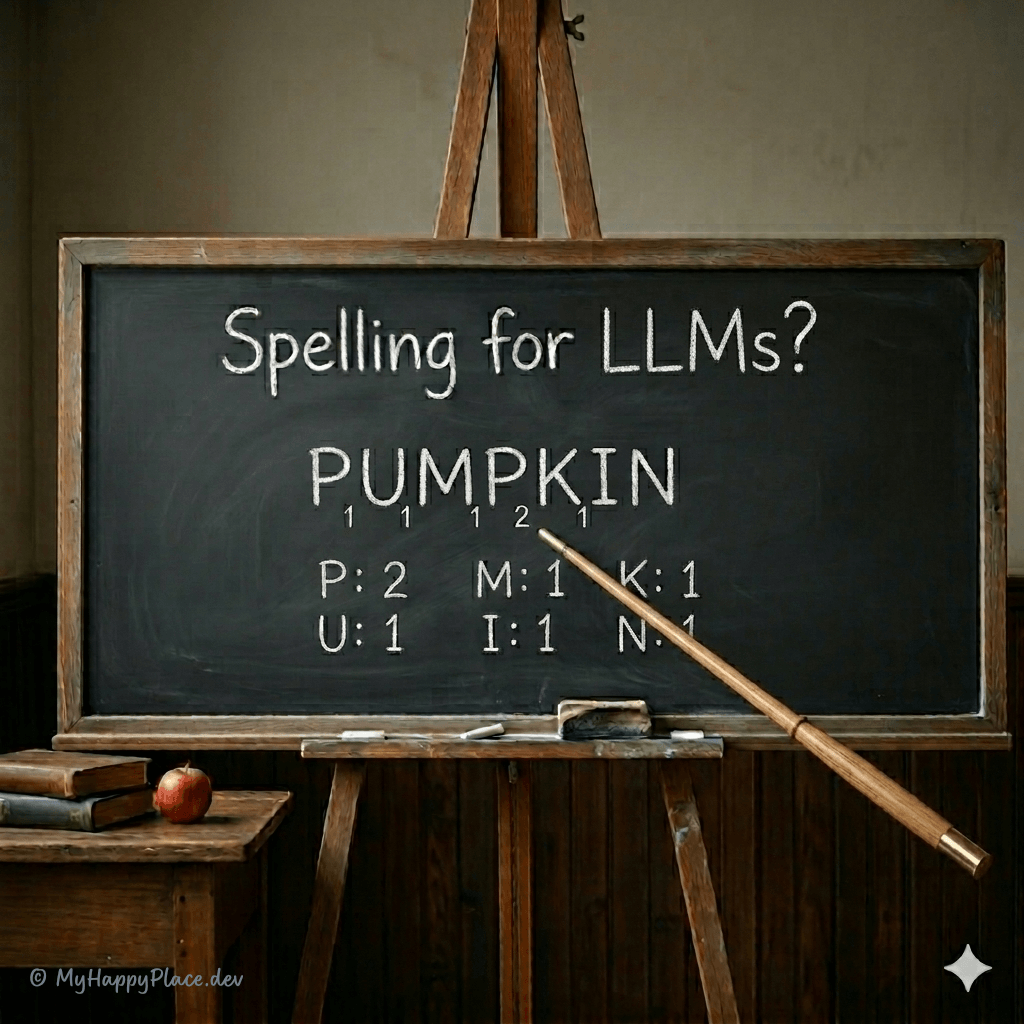

The Problem: Why do LLMs struggle with spelling?

We are often surprised when models capable of complex reasoning fail at simple orthography. The answer lies in tokenization. As I explored in my previous post on fine-tuning DistilBERT, models do not read individual letters; they process "tokens."

A word like "tokenization" might be mapped to a single numerical ID, or to just a few subword IDs, so the model loses the granular view of the characters that compose it. This makes tasks like spelling correction or character-level manipulation inherently difficult for transformer-based language models.
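To make this concrete, here is a toy sketch of subword tokenization. The vocabulary and IDs are invented for illustration. Real tokenizers (such as the byte-pair encoding tokenizers used by many LLMs) learn their vocabularies from data and may split these words differently. The point is only that the model receives a short list of IDs, not the letters inside them.

```python
# Toy illustration: a hypothetical subword vocabulary, NOT a real tokenizer's.
TOY_VOCAB = {
    "token": 1001,
    "ization": 1002,
    "straw": 2001,
    "berry": 2002,
}


def toy_tokenize(word: str, vocab: dict[str, int]) -> list[int]:
    """Greedy longest-match subword tokenization over a toy vocabulary."""
    ids = []
    i = 0
    while i < len(word):
        # Try the longest vocabulary piece that matches at position i.
        for end in range(len(word), i, -1):
            piece = word[i:end]
            if piece in vocab:
                ids.append(vocab[piece])
                i = end
                break
        else:
            raise ValueError(f"No vocabulary piece matches {word[i:]!r}")
    return ids


for word in ["tokenization", "strawberry"]:
    ids = toy_tokenize(word, TOY_VOCAB)
    # The model sees only the IDs; the letters inside each piece are hidden from it.
    print(word, "->", ids, "| letters:", len(word), "| tokens:", len(ids))
```

Here "strawberry" becomes two IDs, so a question like "how many r's?" asks about information that is not directly present in the model's input.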

The Shift: From Correct Answers to Reward Systems

In my previous project, I explored how LoRA (Low-Rank Adaptation) could specialize a model for classification. But while supervised learning is powerful, it often falls short when a task requires granular, step-by-step logic, such as counting the occurrences of a letter in a word.
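To see why letter counting is step-by-step logic, here is a sketch of the kind of trace a model needs to produce to count reliably: spell the word one letter at a time and carry a running count forward. The trace format (numbered letters with a running count) is a hypothetical choice for illustration, not necessarily the exact format used in the project.

```python
def letter_count_trace(word: str, letter: str) -> tuple[list[str], int]:
    """Spell `word` letter by letter, keeping a running count of `letter`."""
    steps = []
    count = 0
    for position, char in enumerate(word, start=1):
        if char.lower() == letter.lower():
            count += 1
        # Each step depends on the count carried over from the previous step.
        steps.append(f"{position}. {char} (count: {count})")
    return steps, count


steps, total = letter_count_trace("strawberry", "r")
print("\n".join(steps))
print("Answer:", total)
```

The final answer only emerges after every intermediate step is correct, which is exactly the kind of behavior that is hard to teach by showing the model final answers alone.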

Using Unsloth for efficiency, I configured the LoRA adapters to target both the attention and MLP layers of the model, providing a balance between memory usage and reasoning capability:

```python
from unsloth import FastLanguageModel

# `model` is assumed to be the base Qwen 2.5 3B model loaded earlier
# (e.g. with FastLanguageModel.from_pretrained).
model = FastLanguageModel.get_peft_model(
    model,
    r=64,  # LoRA rank
    target_modules=[
        "q_proj", "k_proj", "v_proj", "o_proj",  # Attention layers
        "gate_proj", "up_proj", "down_proj",  # MLP layers
    ],
    lora_alpha=64,  # LoRA scaling factor (alpha / r = 1 here)
    use_gradient_checkpointing="unsloth",  # Unsloth's memory-saving checkpointing
)
```

To address the limits of supervised learning, I moved beyond traditional supervised fine-tuning into Reinforcement Learning using GRPO (Group Relative Policy Optimization). Instead of telling the model exactly what to say (Supervised Learning), I built a reward system that incentivizes the right behaviors. This is known as Reward Shaping.
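A key idea in GRPO is that it does not need a separate value model: for each prompt it samples a group of completions, scores them with the reward functions, and rates each completion relative to the rest of its group. The sketch below shows only that scoring step, under simplifying assumptions: rewards from several functions are summed with equal weight, and advantages use the group mean and population standard deviation. It is not the Unsloth or TRL implementation, and it omits the policy update itself, clipping, and the KL penalty.

```python
import statistics


def combine_rewards(per_function_rewards: list[list[float]]) -> list[float]:
    """Sum the scores that several reward functions gave to the same completions."""
    return [sum(scores) for scores in zip(*per_function_rewards)]


def group_relative_advantages(rewards: list[float], eps: float = 1e-4) -> list[float]:
    """Score each completion relative to its group (all sampled for one prompt)."""
    mean = statistics.fmean(rewards)
    std = statistics.pstdev(rewards)
    # Above-average completions get positive advantages, below-average negative.
    return [(r - mean) / (std + eps) for r in rewards]


# Hypothetical scores for a group of 4 completions sampled for one prompt.
format_rewards = [0.5, 0.5, 0.0, 0.5]
answer_rewards = [2.0, -1.0, -1.0, 2.0]

total = combine_rewards([format_rewards, answer_rewards])
advantages = group_relative_advantages(total)
print(total)  # [2.5, -0.5, -1.0, 2.5]
print([round(a, 3) for a in advantages])
```

Because advantages are relative, a completion is pushed up not for being perfect but for being better than its siblings, which lets several small, shaped rewards steer the model gradually.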

Teaching "Reasoning", not just "Spelling"

In this project, I developed five distinct reward functions to guide a Qwen 2.5 3B model. One of the most critical is `correct_answer_reward_func`, which checks whether the model's final answer, extracted from its `<answer>` tag, matches the expected count:

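```python
# Assumed helper (not shown in the original): return the text inside <answer>...</answer>.
def extract_xml_answer(text: str) -> str:
    return text.split("<answer>")[-1].split("</answer>")[0].strip()


def correct_answer_reward_func(prompts, completions, counts, **kwargs) -> list[float]:
    """Reward the final answer if it is exactly correct."""
    # Each completion is a list of chat messages; take the generated text.
    responses = [completion[0]["content"] for completion in completions]
    extracted_responses = [extract_xml_answer(r) for r in responses]
    # +2.0 for an exact match with the expected count, -1.0 otherwise.
    return [
        2.0 if str(r) == str(a) else -1.0
        for r, a in zip(extracted_responses, counts)
    ]
```

The broader goal was to teach the model a Chain-of-Thought (CoT) approach, "thinking out loud" inside specific tags before giving its final answer. The reward functions target different parts of that behavior: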

- Format Reward: Does the model use the required `<reasoning>` and `<answer>` tags?
- Spelling Reward: Does it correctly break the word down letter by letter?
- Logical Consistency: Is the running count accurate at each step of the reasoning?
- Final Accuracy: Is the final count correct?
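The process-oriented checks in the list above could be sketched as follows. Everything here is an assumption for illustration: the numbered `letter (count: n)` reasoning format, the dataset columns `words` and `letters`, and the reward values are hypothetical, not the project's actual functions. The signatures mirror `correct_answer_reward_func`: each function receives a batch of completions (each a list of chat messages) and returns one score per completion.

```python
import re

# Hypothetical layout: <reasoning> with one "N. letter (count: k)" line per letter,
# then the final count inside <answer> tags.
FORMAT_RE = re.compile(r"^<reasoning>\n.*?\n</reasoning>\n<answer>\n.*?\n</answer>\n?$", re.DOTALL)
STEP_RE = re.compile(r"^\d+\.\s*(\S)\s*\(count:\s*(\d+)\)$")


def parse_steps(text: str) -> list[tuple[str, int]]:
    """Extract (letter, running_count) pairs from the assumed reasoning lines."""
    steps = []
    for line in text.splitlines():
        match = STEP_RE.match(line.strip())
        if match:
            steps.append((match.group(1), int(match.group(2))))
    return steps


def format_reward_func(prompts, completions, **kwargs) -> list[float]:
    """Reward completions that follow the <reasoning>/<answer> layout."""
    responses = [c[0]["content"] for c in completions]
    return [0.5 if FORMAT_RE.match(r) else 0.0 for r in responses]


def spelling_reward_func(prompts, completions, words, **kwargs) -> list[float]:
    """Reward reasoning that spells the target word letter by letter."""
    responses = [c[0]["content"] for c in completions]
    rewards = []
    for response, word in zip(responses, words):
        spelled = "".join(letter for letter, _ in parse_steps(response))
        rewards.append(1.0 if spelled.lower() == word.lower() else 0.0)
    return rewards


def running_count_reward_func(prompts, completions, letters, **kwargs) -> list[float]:
    """Reward reasoning whose running count is correct at every step."""
    responses = [c[0]["content"] for c in completions]
    rewards = []
    for response, target in zip(responses, letters):
        steps = parse_steps(response)
        expected = 0
        consistent = bool(steps)  # no parsed steps means no credit
        for letter, count in steps:
            if letter.lower() == target.lower():
                expected += 1
            if count != expected:
                consistent = False
                break
        rewards.append(1.0 if consistent else 0.0)
    return rewards


sample = "<reasoning>\n1. b (count: 0)\n2. e (count: 1)\n3. e (count: 2)\n</reasoning>\n<answer>\n2\n</answer>\n"
completions = [[{"role": "assistant", "content": sample}]]
print(format_reward_func(None, completions))  # [0.5]
print(spelling_reward_func(None, completions, words=["bee"]))  # [1.0]
print(running_count_reward_func(None, completions, letters=["e"]))  # [1.0]
```

Splitting the checks this way means a completion with the right answer but sloppy intermediate steps still earns less than one whose every step is correct.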

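The result is a model that stops guessing and starts reasoning. By rewarding the process and not just the result, the model learns to avoid "broken windows" in its logic: small early mistakes, such as a skipped letter or a miscounted step, that would otherwise compound into a wrong final answer.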

Conclusion

This project reinforces my belief that the next frontier of AI isn't just bigger models, but smarter training loops. By defining clear "team agreements" for the model in the form of reward functions, we can encourage structured, step-by-step reasoning and robustness even in smaller, more efficient models.

Whether we are classifying global news or simply teaching a model to spell, parameter-efficient fine-tuning combined with reinforcement learning remains a powerful methodology for modern software craftsmanship.